The 1990s shopping spree for applications produced a spaghetti of links between databases and applications, while manual data entry chipped away at the petroleum professional’s effective time. Then a wave of mega M&As hit the industry in the late 1990s and the early 2000s.

Mega M&As (mergers and acquisitions) continued into the first part of the 2000s, bringing with them, at least for those on the acquiring side, a new level of data management complexity.

With mega M&As, the acquiring companies inherited more databases and systems, along with boxes upon boxes of physical data. This influx of information proved to be too much at the outset, and companies struggled, and continue to struggle, to check the quality of the technical data they had inherited. Unknown at the time, the data quality issues present at the outset of these M&As would have lasting effects on current and future data management efforts. In some cases they gave rise to lawsuits that were settled for millions of dollars.

Early 2000s

Companies started to experiment with the Internet. At that time, that meant experimenting with simple reporting and limited intelligence on the intranet. Reports were still mostly distributed via email attachments and/or posted in a centralized network folder.

I am convinced that it was the Internet that necessitated cleaning technical data and key header information, for two reasons: 1) Web reports forced integration between systems, as business users wanted data from multiple siloed databases on one page. More often than not, real-time integration could not be realized without cleaning the data first. 2) Web reports linked directly to databases exposed more “holes” and multiple “versions of the same data”; they revealed how necessary it was to have only ONE VERSION of information, and that had better be the truth.
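To make the “holes” and “multiple versions” problem concrete, here is a minimal sketch in Python. The two system names, the well identifiers and the header fields are all made up for illustration; real systems hold far more fields and need fuzzier matching than a plain equality test.

```python
# Hypothetical header records for the same wells held in two siloed systems.
# System names, well identifiers and field names are illustrative assumptions.
production_db = {
    "WELL-001": {"well_name": "Smith 1-H", "operator": "Acme Energy", "spud_date": "1998-03-14"},
    "WELL-002": {"well_name": "Jones 2", "operator": "Acme Energy", "spud_date": "1999-07-02"},
}
drilling_db = {
    "WELL-001": {"well_name": "SMITH #1H", "operator": "Acme Energy", "spud_date": "1998-03-14"},
    "WELL-003": {"well_name": "Lee 4", "operator": "Acme Energy", "spud_date": "2000-01-20"},
}


def compare_headers(source_a, source_b):
    """Return (conflicts, holes) for two sources keyed by well identifier."""
    conflicts = []
    # Wells in both systems: look for fields whose values disagree.
    for well_id in sorted(source_a.keys() & source_b.keys()):
        shared_fields = source_a[well_id].keys() & source_b[well_id].keys()
        for field in sorted(shared_fields):
            value_a = source_a[well_id][field]
            value_b = source_b[well_id][field]
            if value_a != value_b:
                conflicts.append((well_id, field, value_a, value_b))
    # Wells in only one system are the "holes" a combined report would expose.
    holes = sorted(source_a.keys() ^ source_b.keys())
    return conflicts, holes


conflicts, holes = compare_headers(production_db, drilling_db)
for well_id, field, value_a, value_b in conflicts:
    print(f"{well_id}: {field} differs: {value_a!r} vs {value_b!r}")
print("Wells present in only one system:", holes)
```

Even this toy comparison shows why a single web page pulling from both systems could not be trusted until someone decided which well name was the true one.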

The majors were further ahead, but at many other E&P companies engineers were still integrating technical information manually, mostly in Excel, taking a day or more to get a complete view and understanding of their wells. Theoretically, with these new technologies, it should have been possible to automate this work and instantaneously give a 360-degree view of a well, field, basin and what have you. In practice it was a different story because of poor data quality. Many companies started data cleaning projects; some efforts were massive, costing tens of millions of dollars, and involved merging systems from many past acquisitions.

In the USA, in addition to the Internet, the collapse of Enron in late 2001 and the Sarbanes–Oxley Act (SOX), enacted on July 30, 2002, forced publicly traded oil and gas companies to document their operations and finances and make them more transparent. Data management professionals were busy implementing their understanding of SOX, which required tighter definitions and processes around data.

Mid 2000s

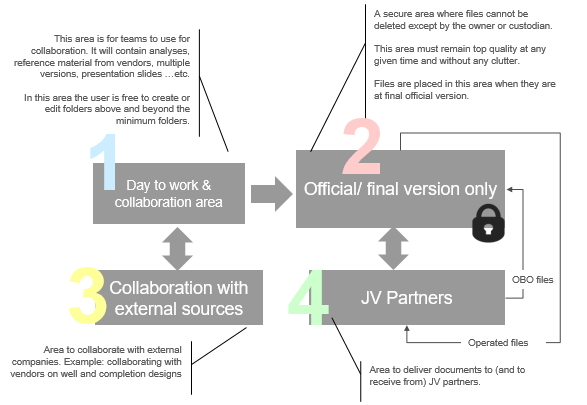

By the mid-2000s, many companies had started looking into data governance. Sustaining data quality was now at the forefront. The need for both sustainable data quality and data integration gave rise to Well Master Data Management initiatives. Projects on well hierarchy, data definitions, data standards, data processes and more were all evolving around reporting and data cleaning projects. Each company worked on its own standards, sharing success stories from time to time. Organizations such as Energistics, PPDM and DAMA came in handy but were not fully relied on.

Late 2000s

When working on sustaining data quality, one runs into the much-debated question of who owns the data. For years the IT department tried to lead the “data management” efforts, but IT was not equipped to clean technical oil and gas data alone; it needed heavy support from the business. However, the engineers and geoscientists did not feel it was their priority to clean “company-wide” data.

CIOs and CEOs started realizing that separating data from systems is a better proposition for E&P. Data lives forever while systems come and go. We started seeing a movement towards a data management department, separate and independent from IT but working closely with it. A few majors made this move in the mid-2000s with good success stories; others started in the late 2000s. The typical path was to first have a Data Management Manager reporting to the CIO (perhaps with a dotted line to a business VP), and later reporting directly to the business.

Who would staff a separate data management department? You guessed it: resources came from both the business and IT. In the past, each department or asset had its own team of technical assistants (“Techs”) who supported its data needs (purchasing, cleaning, loading, massaging, etc.). Now many companies are consolidating their “Techs” into one data management department that supports many departments.

Depending on how the DM department is run, this can be a powerful model if it is truly run as a service organization with the matching sense of urgency that E&P operations see. In my opinion, this could result in cheaper, faster and better data services for the company, and a more rewarding career path for those who are passionate about data.

In late 2008 and throughout 2009, gas prices fell, more so in the USA than in other parts of the world. Shale gas supply had caught up with demand and was exceeding it. In April 2010, we woke up to witness one of the largest offshore oil spill disasters in history: a BP well, Macondo, blew out and was gushing oil.

Companies that had put all their bets on gas fields or offshore fields had no appetite for data management projects. For those that were well diversified or more focused on onshore liquids, data management projects were either at full speed or business as usual.

2010 to 2015 ….

Companies that had enjoyed high oil prices since 2007 started investing heavily in “digital” oilfields. More than 20 years had passed since the majors started this initiative (I was on this type of project with Schlumberger for one of the majors back in 1998). But now it was more justifiable than ever. Technology prices had come down, system capacities were up, networks were reliable, wireless connections were reasonably steady, and more. All came together like a perfect storm to resurrect the “smart” field initiatives like never before. Even the small independents were now investing in this initiative. High oil prices justified the price tag (multiple millions of dollars) on these projects. A good part of these projects lies in managing and integrating real-time data streams and intelligent calculations.

Two more trends appeared in the first half of the 2010s:

- Professionalizing petroleum data management. This seemed like a natural progression now that data management departments are in every company. The PPDM organization has a competency model that is worth looking into. Some of the majors have their own models that are tied to their HR structure. The goal is to reward a DM professional’s contribution to the business’s assets. (Also, please see my blog post on the MSc in Petroleum DM.)

- Larger companies are starting to experiment with and harness the power of Big Data and the integration of structured with unstructured data. Metadata and the management of unstructured data have become more important than ever.

Both trends have tremendous contributions that are yet to be fully harnessed. The Big Data trend in particular is nudging data managers to start thinking of more sophisticated “analysis” than they did before. One could argue, though, that the Technical Assistants who helped engineers with some analysis were already nudging towards data analytics initiatives.

By December 2015, the oil price had collapsed by more than 60% from its peak.

But to my friends’ disappointment, standards are still being defined. Well hierarchy seems simple to the business folks, but getting it all automated and running smoothly across all types and locations of assets will require the intervention of the UN. Amid the data quality commotion, some data management departments have become a bit detached from operational reality and take too long to deliver.

This concludes my series on the history of Petroleum Data Management. Please add your thoughts; I would love to hear your views.

For Data Nerds

- Data ownership has now come full circle, from the business to IT and back to business.

- The rise of shale and coal-bed methane properties and the fast evolution of field technologies are introducing new data needs. Data management systems and services need to stay nimble and agile. The old way of taking years to come up with a usable system is too slow.

- Data cleaning projects are costly, especially when cleaning legacy data, so prioritizing and having a complete strategy that aligns with the business’s goals are key to success. Well-header data is a very good place to start, but aligning with what operations really need will require paying attention to many other data types, including real-time measurements.

- When instituting governance programs, having a sustainable, agile and robust quality program is more important than temporarily patching problems based on a specific system.

- Tying data rules to business processes, starting from the wellspring of the data, is key to sustainable solutions.

- Consider outsourcing all your legacy data cleanups if they take resources away from supporting day-to-day business needs. Legacy data cleaning outsourced to specialized companies will always be faster, cheaper and more accurate.

- Consider leveraging standardized data rules from organizations like PPDM instead of building them from scratch. Consider adding to the PPDM rules database as you define new ones. When rules are standardized, sharing and exchanging data becomes easier and more cost-effective.
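For those who like to see things in code, the idea of data rules applied to well-header data can be sketched roughly as below. This is only an illustration in Python: the rule names, field names and sample records are my own assumptions and are not taken from the PPDM rules database or any vendor system.

```python
from datetime import date

# Hypothetical data rules: each rule name maps to a check on one well-header record.
# These are illustrative examples, not actual PPDM rules.
RULES = {
    "well_id is present": lambda r: bool(r.get("well_id")),
    "latitude is between -90 and 90": lambda r: (
        r.get("latitude") is not None and -90 <= r["latitude"] <= 90
    ),
    "longitude is between -180 and 180": lambda r: (
        r.get("longitude") is not None and -180 <= r["longitude"] <= 180
    ),
    "spud date is not after completion date": lambda r: (
        r.get("spud_date") is None
        or r.get("completion_date") is None
        or r["spud_date"] <= r["completion_date"]
    ),
}


def failed_rules(record):
    """Return the names of all rules that the record violates, in rule order."""
    return [name for name, check in RULES.items() if not check(record)]


# Made-up sample records for illustration only.
good_well = {
    "well_id": "WELL-001",
    "latitude": 29.76,
    "longitude": -95.37,
    "spud_date": date(2009, 5, 1),
    "completion_date": date(2009, 8, 15),
}
bad_well = {
    "well_id": "WELL-002",
    "latitude": 95.2,  # outside the valid range
    "longitude": -101.9,
    "spud_date": date(2010, 6, 1),
    "completion_date": date(2010, 3, 1),  # earlier than the spud date
}

print(failed_rules(good_well))
print(failed_rules(bad_well))
```

Keeping the rules in one shared, named collection rather than burying them inside a specific system is what makes them reusable across cleanups and easier to exchange between companies.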